Hey there! 👋

Welcome back to SavvyMonk, your one-stop source for AI and tech news that actually matters.

Today we're looking at the massive Anthropic data leak that revealed their unreleased flagship model. Thousands of files. A brand new tier above Opus. And a whole lot of questions about whether this was actually an accident.

Let's get into it.

TODAY'S DEEP DIVE

Anthropic Just Accidentally Announced Its Next Flagship

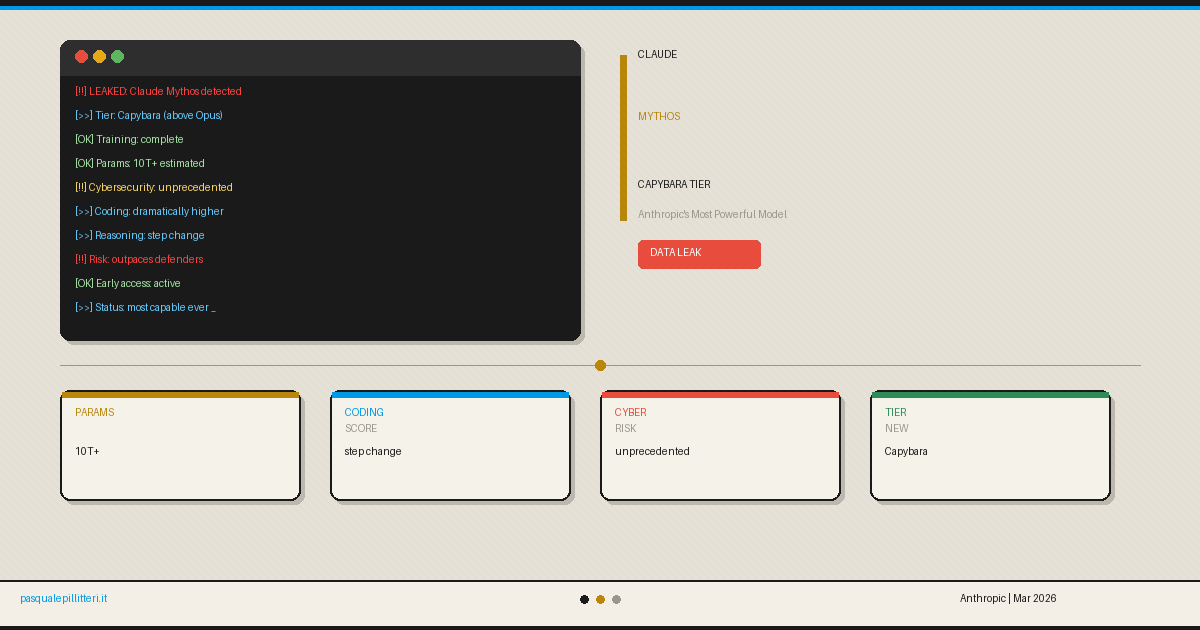

Here is how you find out your most powerful AI model has leaked: someone on the internet discovers a misconfigured CMS data store with 3,000 unpublished internal assets sitting in plain view.

Claude Mythos

Among them: a draft blog post describing a new model called Claude Mythos, a new model tier called Capybara, performance data comparing it to Claude Opus 4.6, and a remarkably candid warning about what this model could do in the wrong hands.

Anthropic confirmed to Fortune that the leak was real, that training on the model has been completed, and that it represents "a step change" and "meaningful advances in reasoning, coding, and cybersecurity."

One document. Not a product launch. Not a press conference. A draft nobody was supposed to see.

The Capybara Tier

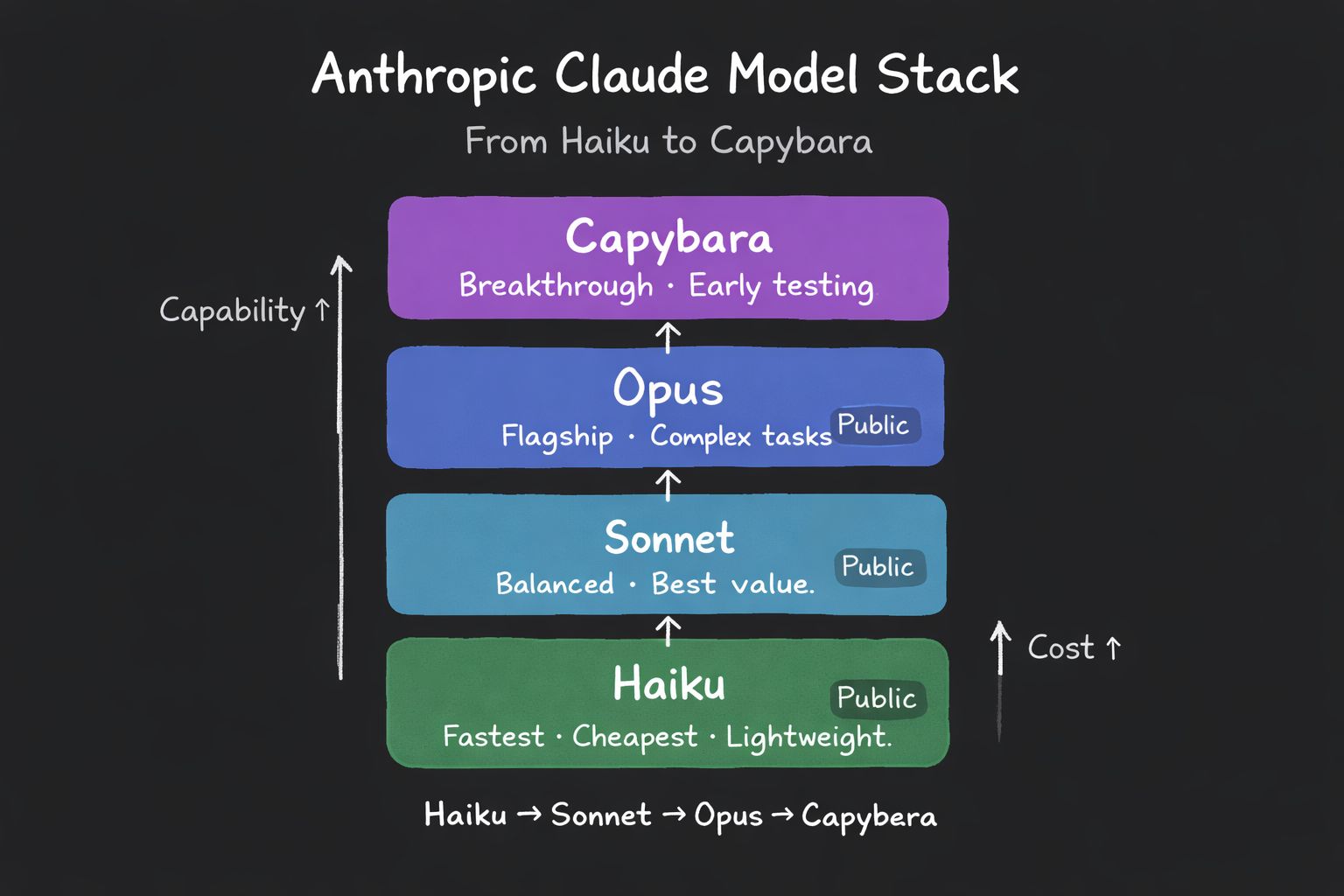

First, the model hierarchy. Right now, Anthropic has three tiers: Haiku (fast and cheap), Sonnet (balanced), and Opus (most capable).

Claude Mythos doesn't fit into any of them. It sits above Opus entirely, in a new tier called Capybara: larger, more capable, and significantly more expensive to run.

The leaked draft compared Mythos directly to Claude Opus 4.6, currently Anthropic's best available model, and claimed it "gets dramatically higher scores on tests of software coding, academic reasoning, and cybersecurity." The exact numbers weren't confirmed, but Anthropic's own spokesperson acknowledged the "step change" framing without pushing back on it.

Training is complete. The model exists. It is already in early access testing with select organizations.

The Cybersecurity Warning Is the Real Story

Here is the part of the leak that is genuinely worth sitting with.

Anthropic's own draft blog described Claude Mythos as "currently far ahead of any other AI model in cyber capabilities" and immediately followed that with a warning that it could "enable large-scale cyberattacks" and help attackers "outpace defenders."

This is not a vague disclaimer buried in a terms of service. This is Anthropic, in its own marketing copy, warning that its new model represents a serious offensive cybersecurity risk.

The specific concern is vulnerability exploitation. AI models are already being used to scan codebases for bugs. Anthropic's own Claude Code Security tool found over 500 vulnerabilities in production open-source software using Opus 4.6, bugs that had gone undetected for decades. Mythos, according to the leaked material, is dramatically more capable at this task.

The implication is uncomfortable: a model powerful enough to find and exploit software vulnerabilities faster than human defenders can patch them is not a theoretical future risk. It is a model that has already finished training.

Because of this, Anthropic is planning a restricted rollout, early access only, limited to cyber defense organizations and select trusted partners, specifically to give defenders a head start before broader release.

The "Accidental" Leak Feels Familiar

The timing and nature of this leak deserve some skepticism.

A safety-focused AI company accidentally exposing its most powerful model's launch materials in a publicly accessible data store has a very specific vibe. It echoes the OpenAI Q* era, where conveniently timed internal rumors generated enormous press coverage and shaped public narrative without any formal announcement.

Accidental or not, the effect is identical to a launch announcement: the model is now public knowledge, the "step change" framing is in the press cycle, and Anthropic gets the attention without the accountability of a formal release. Nobody has to answer hard questions in a press conference. The draft blog does the work instead.

That doesn't mean the leak was staged. CMS configuration errors are real and genuinely embarrassing. But it is worth noting that this "accident" happened to produce exactly the kind of breathless coverage that a planned announcement would have generated, and then some.

What This Means for the AI Landscape

Claude Mythos, if it performs as the leaked materials suggest, would represent a meaningful shift in the frontier model landscape.

OpenAI's GPT-5 line and Google's Gemini 3 series are both genuinely impressive. But Anthropic has been quietly building something above its own Opus tier, a model class that doesn't have a direct equivalent in any other lab's current lineup.

The Capybara tier is also a signal about where the frontier is heading: toward models that are expensive enough and capable enough that they require new access frameworks, new safety protocols, and new conversations about who should be allowed to use them at all.

That is a different category of product from a chatbot. It is infrastructure, the kind of infrastructure that shapes what is possible in security, research, and defense for years.

The Bottom Line

A CMS misconfiguration just gave the world its first look at the most capable AI model Anthropic has ever built. The model has a new name, a new tier above Opus, and a warning baked directly into its own launch materials that it could help attackers outpace defenders.

Anthropic confirmed the model exists. Training is complete. Early access is already live with select organizations.

Whether the leak was genuinely accidental or conveniently timed, the result is the same: Claude Mythos is real, it's already trained, and it's the most capable model Anthropic has built so far. The question now is what they do with it and who they give it to first.

AI PROMPT OF THE DAY

Category: Cinematic Teaser

"Act as a trailer editor and AI video prompt writer. I want to create a 20-second cinematic teaser about a leaked AI model that could outpace every cybersecurity defender on earth. Write: 1) A dramatic voiceover script that builds tension without revealing too much, 2) A 6-shot storyboard with specific camera angles, lighting, and motion, 3) Three text-to-video generation prompts optimized for Runway or Grok Imagine for the hero shots, and 4) On-screen text overlays that feel tense and modern, not like a sci-fi movie trailer."

ONE LAST THING

If a safety-focused AI lab is warning in its own marketing copy that its new model could enable large-scale cyberattacks, should that model be released at all? Or is giving defenders early access actually the most responsible path forward? Hit reply. I read every response.

See you tomorrow.

— Vivek

P.S. Know someone following AI, cybersecurity, or frontier model development? Forward this. They can subscribe at https://savvymonk.beehiiv.com/