Hey there! 👋

Welcome back to SavvyMonk, your one-stop shop for AI and tech news that actually matters.

Anthropic just dropped findings from what it says is the largest qualitative study on AI attitudes ever conducted. Over 80,000 Claude users. 159 countries. 70 languages. One week. And the whole thing was run by an AI interviewer.

Let's get into it.

TODAY'S DEEP DIVE

What 80,000 People Actually Think About AI and What Anthropic Learned by Asking

Back in December 2025, Anthropic launched a tool called Anthropic Interviewer, a specially prompted version of Claude designed to conduct open-ended, adaptive conversations with real people. Think of it as a qualitative researcher that never gets tired and speaks 70 languages.

The tool wasn't a checkbox survey. It asked users what they use AI for, what they dream it could do, and what scares them about where things are headed. Then it followed up based on responses, probing deeper the way a good human interviewer would.

Over one week, 80,508 people across 159 countries sat down for these conversations. Anthropic says it's the largest and most multilingual qualitative study ever conducted.

The Big Finding: Fear of AI Getting It Wrong

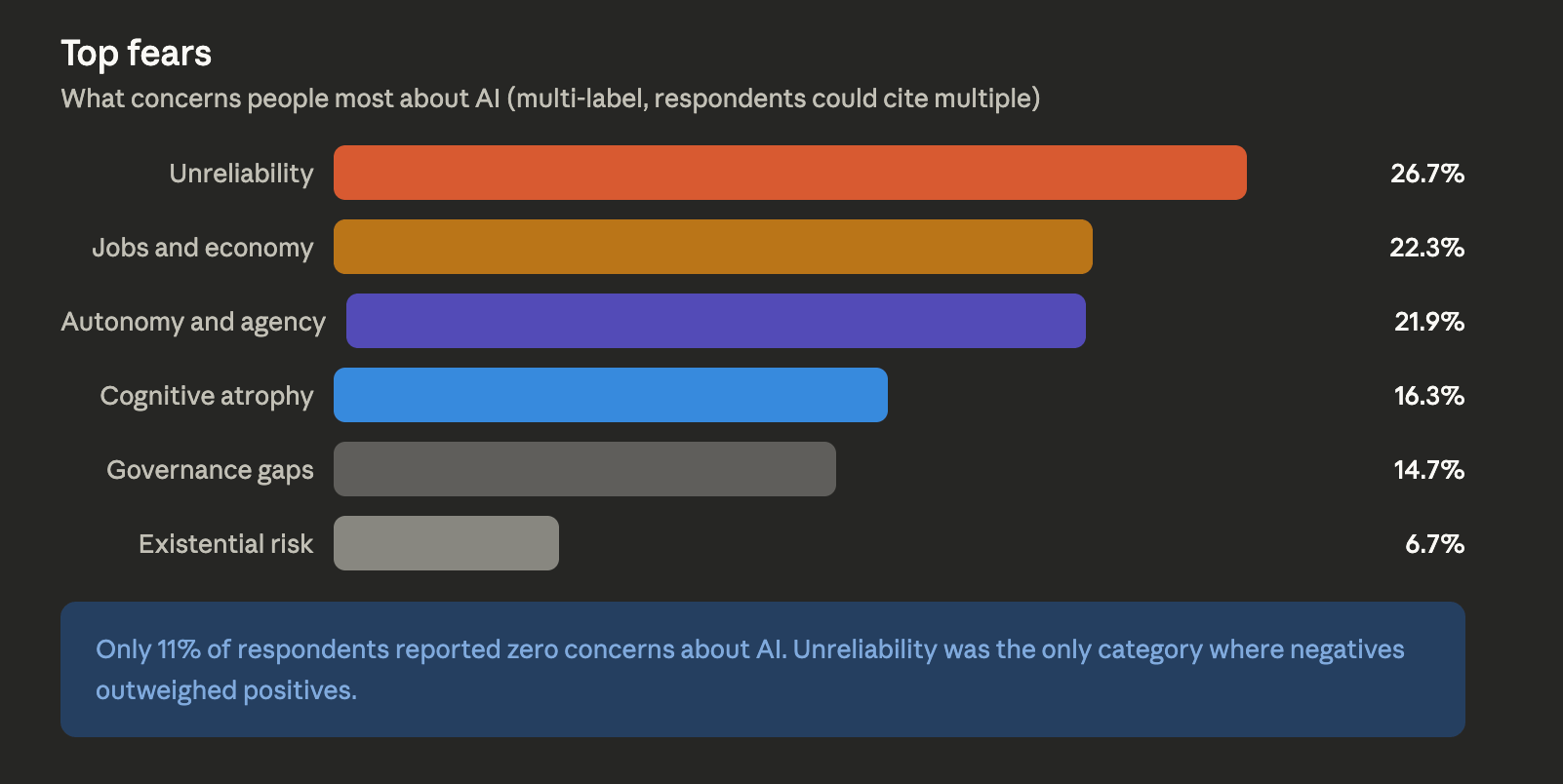

Here's where things get interesting. The number one concern wasn't job loss. It wasn't surveillance. It wasn't the robot apocalypse.

It was unreliability.

26.7% of respondents said their biggest worry is AI making incorrect decisions, hallucinating facts, or citing sources that don't exist.

One respondent from Brazil put it bluntly: they said they had to take photos to prove the AI was wrong, and it felt like arguing with someone who refuses to admit a mistake.

Job displacement and economic impact came second at 22.3%. Loss of human autonomy and agency was right behind at 21.9%. Cognitive atrophy (the fear that relying on AI is making us worse at thinking) hit 16.3%. And concerns about governance gaps rounded out the top five at 14.7%.

Only 11% of respondents said they had zero fears about AI. The other 89% had plenty to say.

What People Actually Want

On the hopes side, professional excellence topped the list at 18.8%: people want AI to handle the routine stuff so they can focus on higher-value work. Personal transformation came next at 13.7%, followed by life management at 13.5% and time freedom at 11.1%.

But here's the layer underneath those numbers. When the Anthropic Interviewer pushed deeper on what people really meant by “productivity,” the answers shifted. It wasn't about doing more work. It was about having more life outside of work. One respondent in Colombia said AI efficiency at work meant they could cook with their mother instead of finishing tasks. A freelancer in Japan said they wanted to spend less brain power on client problems so they could read more books.

81% of respondents said AI had already taken meaningful steps toward their goals. The most common realized benefit was productivity, reported by 32%.

The Light and Shade Problem

Anthropic calls it “light and shade.” The things people love most about AI are often the same things they fear. People value AI for emotional support but are three times more likely to worry about becoming dependent on it. They praise AI for helping them learn but fear it's eroding their ability to think independently. They celebrate time savings but wonder if AI is just raising expectations and adding more work.

This tension was especially visible in education. 33% of respondents mentioned learning benefits from AI. But 17% raised concerns about cognitive atrophy. Among teachers specifically, that atrophy concern jumped to 24%.

The Global Picture

Sentiment toward AI was majority-positive everywhere. No country dipped below 60% favorable. But the spread was notable.

South America, Africa, and much of Asia viewed AI with more optimism. People in these regions tended to see AI as an economic ladder, a way to leapfrog traditional barriers and access global opportunities. Concerns about jobs and the economy were lower in these areas.

The U.S. and Western Europe were more skeptical. East Asia had a distinct profile. Countries like Japan and South Korea weren't as worried about governance or surveillance. Instead, their standout concerns were cognitive atrophy and loss of meaning. The West worries about who controls AI. East Asia worries about what AI does to the person using it.

Wealthier, more AI-exposed regions tended to want AI to manage the complexity of life. Developing regions wanted AI to create opportunity.

Why This Study Matters Beyond the Data

The data is useful. But the method might be the bigger story.

A year ago, running 80,000 in-depth qualitative interviews across 70 languages in a single week was not possible. Traditional qualitative research requires trained human interviewers, scheduling, transcription, and analysis. It's slow and expensive, which is why most attitude studies default to multiple-choice surveys that capture breadth but miss depth.

Anthropic essentially used AI to understand attitudes about AI. And it worked. Participants rated their satisfaction with the interview process overwhelmingly high. 97.6% of pilot participants gave it a 5 or higher out of 7.

This is a proof of concept for AI as a research tool at a scale that simply didn't exist before. The implications go well beyond this one report: customer research, employee feedback, policy analysis, market studies.

The Caveats

Anthropic was transparent about limitations. The sample is entirely Claude users, which skews toward early adopters who already find value in AI. Nearly half of respondents came from North America and Western Europe. The interview asked about positive visions before concerns, which may have primed responses. And the AI classification system, while impressive, introduces its own interpretive layer.

This isn't a representative snapshot of global opinion. It's a detailed look at how active AI users think and feel. That distinction matters.

The Bottom Line

The people who actually use AI every day aren't worried about Skynet. They're worried about hallucinated citations, wrong answers they can't catch, and slowly forgetting how to do things themselves. Unreliability isn't just a technical bug. It's the single biggest barrier to trust. And trust is what determines whether AI goes from a tool people try to one they rely on. For every AI company, that 26.7% number should be circled in red.

AI PROMPT OF THE DAY

Category: Research Design

“I want to understand how [target audience] feels about [topic]. Design a qualitative interview guide with 5-7 open-ended questions that start broad and get progressively more specific. Include follow-up probes for each question. The tone should be conversational, not clinical. Format as a numbered list with sub-bullets for probes.”
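
If you want to reuse this prompt across several audiences and topics, here's a minimal Python sketch that fills in the two bracketed slots. The names `PROMPT_TEMPLATE` and `build_prompt` are made up for illustration, and the script only builds the text. It doesn't call any AI service.

```python
# Hypothetical helper for reusing the prompt above.
# The {audience} and {topic} fields stand in for the bracketed slots.
PROMPT_TEMPLATE = (
    "I want to understand how {audience} feels about {topic}. "
    "Design a qualitative interview guide with 5-7 open-ended questions "
    "that start broad and get progressively more specific. "
    "Include follow-up probes for each question. "
    "The tone should be conversational, not clinical. "
    "Format as a numbered list with sub-bullets for probes."
)


def build_prompt(audience: str, topic: str) -> str:
    """Return the prompt with both slots filled in."""
    audience, topic = audience.strip(), topic.strip()
    # Refuse empty values so a half-filled prompt never gets sent.
    if not audience or not topic:
        raise ValueError("audience and topic must both be non-empty")
    return PROMPT_TEMPLATE.format(audience=audience, topic=topic)


if __name__ == "__main__":
    print(build_prompt("freelance designers", "AI image tools"))
```

Paste the printed text into whichever assistant you use. Swapping the two arguments is all it takes to generate a guide for a different group.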

ONE LAST THING

What struck me most about this study wasn't any single number. It was the gap between what people say they want (productivity) and what they actually mean when you ask a follow-up question (more time with the people they love). Most surveys never get to that second layer. This one did, because an AI kept asking why. That's either poetic or unsettling. Maybe both.

Hit reply. I read every response.

See you in the next one.

— Vivek

P.S. Know someone who wants to understand AI without the hype? They can subscribe at https://savvymonk.beehiiv.com/